asam salim MCMI ChMC

chartered management consultant

AI & Data + Digital Transformation Advisor + Mentor

London

United Kingdom

[email protected]

What I do

I provide advisory, implementation, and mentoring support across digital, data, and AI transformation, helping organisations and individuals turn ambition into practical, sustained outcomes.

Digital, Data & AI Transformation Advisory

I share my vast experience of working with senior leaders to shape direction, make informed decisions, and navigate complexity across digital, data, and AI initiatives. This includes strategy, operating models, governance, and critical decision points where experience and judgement matter most.

I can advise on the strength of your business case in realising your strategic priorities and provide a view on time to value outcomes.

Implementation & Delivery Leadership

I support organisations in turning strategy into reality by leading and shaping delivery across complex programmes. This includes hands-on leadership, team mobilisation, and embedding capability to ensure solutions are adopted and deliver tangible business value. I provide experienced, pragmatic advice at critical points, helping leaders assess delivery risk, make informed trade-offs, and improve the likelihood of success under tight timelines and real-world constraints.

Mentoring for Early and Mid-Career Professionals

I mentor graduates and early- to mid-career professionals who are working towards or already operating in business, consulting, and transformation roles. This includes individuals transitioning into transformation work as well as those developing confidence and judgement within complex delivery environments. My focus is on building practical capability, sound judgement, and the ability to operate effectively with senior stakeholders, drawing on real leadership and delivery experience rather than theory.

If you’re exploring digital, data, or AI transformation, or looking for experienced advisory or mentoring support, feel free to reach out for a conversation.

Get in touch!

My experience centres on shaping strategy, leading multi-disciplinary programmes, and enabling sustained adoption that delivers tangible business value. The table below summarises the areas where I consistently add value, followed by selected examples that illustrate this in practice.

| Experience Area | Business Value Delivered |
|---|---|
| AI Transformation & Adoption | Enabled organisations to move beyond isolated pilots to scalable AI adoption, improving productivity, delivery efficiency, and decision-making. |
| Digital & Data Transformation | Designed and delivered transformation programmes that improved cost efficiency, modernised operating models, and embedded data-driven ways of working. |
| Programme & Portfolio Leadership | Led complex, multi-disciplinary programmes with clear governance, pace, and accountability, ensuring alignment between strategy and delivery. |
| Workforce Enablement & Capability Building | Built practical learning and enablement approaches that supported real adoption, not just training completion. |
| Regulated & Secure Environments | Delivered innovation and transformation within highly regulated sectors, balancing governance, risk, and security with meaningful progress. |

AI Adoption at Scale in a Global Consulting Environment

Within a large consulting organisation with fragmented AI initiatives, I led the shift from experimentation to consistent, role-based adoption. Alongside aligning leadership intent, delivery workflows, and workforce enablement, I leveraged senior relationships across hyperscaler ecosystems, including Microsoft, to accelerate capability, access expertise, and unblock delivery. This resulted in sustained AI usage, improved productivity, and a repeatable adoption model aligned to both organisational ambition and platform maturity.

Data Engineering and Digital Dashboards in a Regulated Environment

Within a highly regulated nuclear decommissioning context, I led the delivery of end-to-end data capabilities spanning data engineering, orchestration, and reporting. This included building reliable data pipelines and digital dashboards that transformed fragmented operational data into trusted, decision-ready insights. A strong focus was placed on usage and adoption, ensuring data products were embedded into day-to-day management and governance processes to support intelligent, data-led decision-making without increasing risk.

Programme Leadership Delivering Measurable Business Outcomes

As Programme Director and transformation lead, I led multi-disciplinary teams across complex portfolios, providing clarity, structure, and momentum. This resulted in tangible improvements in cost efficiency, process performance, and the organisation’s ability to adopt new digital and AI capabilities at pace.

Mentoring Future Transformation Leaders

Alongside senior advisory and delivery roles, I have mentored graduates and early- to mid-career professionals working towards or operating in business, consulting, and transformation roles. This has included supporting individuals entering the profession as well as those stepping into greater responsibility within complex programmes. My focus is on developing practical judgement, confidence, and the ability to operate effectively with senior stakeholders, helping people translate theory into real-world impact and grow into trusted transformation practitioners.


latest

FEBRUARY 15, 2026

AI ADVANTAGE STARTS WITH OPERATIONAL CLARITY

Most organisations are not short of AI ambition. They are short of disciplined scoping.

In boardrooms, conversations about AI often begin at the level of transformation. Entire operating models are reconsidered. New products are imagined. Technology stacks are debated. Yet the most successful AI programmes rarely start there. They begin with a far more grounded exercise: examining the everyday work of the business with structured curiosity.

A practical starting point is to select a single business domain and inventory its recurring tasks. Document them systematically: task name, description, frequency, time spent, and an initial view on automation potential. This discipline reframes AI from an abstract capability to an operational lens. It forces leaders to confront how work is actually performed rather than how it is assumed to function.

The next step is evaluation. Three criteria tend to separate viable AI opportunities from speculative ones. First, repetitiveness: can the task be standardised? Second, data availability: is the required information accessible and structured? Third, solution readiness: do mature tools already exist to address this use case? Scoring tasks against these dimensions introduces rigour and surfaces what might be called AI-ready zones within the organisation.

Case Study 1: Finance Reporting – From Assembly to Insight

In many organisations, monthly management packs are assembled manually from multiple systems. Analysts extract data, reconcile discrepancies, format slides, and draft commentary under time pressure. The process is repetitive, structured, and data-rich.

By embedding AI within the reporting workflow through automated data pipelines, anomaly detection models, and first-draft narrative generation, the organisation reduces manual preparation effort. Analysts move from assembling numbers to interpreting them.

The result is a shorter reporting cycle, more consistent variance analysis, and leadership insight that is both timelier and more forward-looking.

Case Study 2: Recruitment Operations – Scaling Speed and Consistency

High application volumes create bottlenecks in CV screening and early-stage assessments. Processes vary across regions. Response times lengthen. Candidate experience deteriorates.

By integrating AI-enabled assessments and structured screening tools at the front end of recruitment, candidates are evaluated against predefined competencies before recruiter review. Automation handles repetitive filtering, while humans focus on judgement-intensive stages.

The outcome is faster time-to-hire, greater consistency in evaluation, and improved allocation of recruiter capacity toward strategic workforce planning.

Prioritisation then follows naturally. Opportunities should be plotted against impact and feasibility. High-impact, high-feasibility initiatives become candidates for immediate experimentation.
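For readers who want to try this on a spreadsheet export, the inventory, the three evaluation criteria, and the impact-versus-feasibility cut can be sketched in a few lines of Python. Everything specific here is an assumption made for illustration: the 1-5 scoring scale, the use of monthly hours as a stand-in for impact, the averaging of the three criteria into a feasibility score, the thresholds, and the sample tasks and numbers. None of it is a prescribed method.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """One row of the task inventory for a single business domain."""
    name: str
    runs_per_month: int       # frequency
    hours_per_run: float      # time spent
    repetitiveness: int       # assumed 1-5 scale: can the task be standardised?
    data_availability: int    # assumed 1-5 scale: is the data accessible and structured?
    solution_readiness: int   # assumed 1-5 scale: do mature tools already exist?

    @property
    def monthly_hours(self) -> float:
        # Assumption: hours consumed per month stand in for impact.
        return self.runs_per_month * self.hours_per_run

    @property
    def feasibility(self) -> float:
        # Assumption: the three criteria are weighted equally.
        return (self.repetitiveness + self.data_availability + self.solution_readiness) / 3


def ai_ready_zones(tasks, min_feasibility=3.5, min_monthly_hours=20.0):
    """Keep high-impact, high-feasibility tasks, strongest candidates first."""
    shortlisted = [
        t for t in tasks
        if t.feasibility >= min_feasibility and t.monthly_hours >= min_monthly_hours
    ]
    return sorted(shortlisted, key=lambda t: t.monthly_hours * t.feasibility, reverse=True)


# Illustrative inventory only; the figures are invented for the example.
inventory = [
    Task("Monthly management pack", 1, 60.0, 5, 4, 4),
    Task("CV screening", 25, 3.0, 4, 4, 5),
    Task("Board offsite preparation", 1, 30.0, 1, 2, 1),
    Task("Expense query triage", 40, 0.25, 5, 3, 4),
]

for task in ai_ready_zones(inventory):
    print(f"{task.name}: {task.monthly_hours:.0f} h/month, feasibility {task.feasibility:.1f}")
```

In this sample, the two case-study style tasks survive the cut, while the low-feasibility and low-volume tasks drop out. The thresholds are the part most worth debating with the business, since they encode how much risk and effort the organisation is willing to accept for a first experiment.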

The lesson is straightforward. AI advantage does not emerge from sweeping declarations of transformation. It emerges from structured examination of work, disciplined prioritisation, and precise integration into workflows where intelligence compounds operational value.

FEBRUARY 10, 2026

CASE STUDY: BUILDING A TRUSTED AI CAPABILITY IN A CHANGING CONSULTING MARKET

Market Context: Structural Disruption, Not Incremental Change

The consulting market is undergoing a structural shift. Traditional pyramid models, built on high leverage and standardised delivery, are giving way to diamond-shaped structures that rely on fewer junior roles and a higher concentration of specialist expertise under a flatter management structure. At the same time, generative AI is reshaping how analysis, insight generation, and delivery artefacts are produced, fundamentally changing the economics of consulting work that is of value to clients.

Clients are accelerating this shift. Expectations for innovation continue to rise, while tolerance for cost inflation declines. Buyers increasingly demand faster outcomes, demonstrable value, and commercial models aligned to results rather than effort. Niche, AI-native firms are entering the market with focused offerings and lower cost bases, disrupting traditional time-based delivery models.

The Organisational Challenge

Within one large professional services organisation, generative AI adoption emerged rapidly and organically. Individual experimentation delivered early productivity gains, but usage was fragmented. Tools, quality standards, and risk controls varied, creating inconsistency at a time when clients expected advisors to demonstrate credible, responsible AI capability as a baseline.

Leadership recognised that AI needed to be embedded as a core consulting capability, aligned to a more specialist, value-driven operating model.

The Approach: Redesigning How Consulting Work Gets Done

The programme went beyond tool adoption to re-examine core consulting processes. Delivery activities were identified, challenged, and redesigned to determine where AI could materially change how work was performed. This included areas such as research and analysis, risk management, project planning, documentation, and quality assurance.

AI was deliberately applied to compress delivery timelines, converting activities that previously took months into weeks by accelerating insight generation, standardising outputs, and reducing rework. In risk management, AI-supported review and classification improved consistency while reducing manual effort. In project delivery, AI-enabled artefact creation and decision support increased speed without compromising quality.

A unified tooling strategy aligned to existing workflows introduced enterprise-grade security and governance. Clear AI objectives aligned learning, application, and output quality across teams. Capability development focused on depth, with structured upskilling pathways, targeted certification, and an intensive data and AI learning pilot enabling consultants to apply AI directly in specialist roles.

Practical enablement reinforced adoption. Proven use cases with repeatable productivity gains were prioritised and shared through targeted knowledge transfer, supported by a central internal AI hub providing standards, guidance, and examples.

Outcomes and Strategic Implications

The results were sustained and scalable. Consultants delivered work faster, with greater confidence and consistency, and AI-enabled efficiencies became repeatable across engagements. The learning pilot was approved for wider rollout, certification delivered cost efficiencies through strategic partnerships, and an AI Charter formalised principles for safe and responsible use.

Most importantly, the programme established a durable foundation. AI now drives systematic improvements in operational efficiency and personal productivity, while serving as the baseline for developing innovative, value-based products and services. The foundations are set.

FEBRUARY 06, 2026

WHERE AUTOMATION WITH AGENTIC AI BECOMES A REAL BUSINESS DISRUPTER

Most organisations have now tasted AI through copilots and assistants. Productivity has improved at the margins, but the underlying operating model remains unchanged. Agentic AI is different. It is not about helping people do the same work faster. It is about changing who or what does the work in the first place.

At its core, Agentic AI introduces autonomous or semi-autonomous agents that can execute tasks across systems, decisions, and workflows. The disruption happens when these agents move beyond isolated tasks and start owning outcomes.

Across industries, the pattern is already clear. In financial services, agents are beginning to manage onboarding, reconciliation, and compliance monitoring end to end. In healthcare, they coordinate scheduling, documentation, and supply chains across fragmented systems. In manufacturing and energy, agents diagnose issues, trigger maintenance, and rebalance plans in real time. In corporate functions, finance, HR, and IT agents quietly take over repetitive, rules-heavy processes that previously consumed human capacity.

What makes Agentic AI difficult is not the technology. The real work sits in governance, data readiness, workforce trust, and redesigning processes that were never built for autonomous execution. Adding agents on top of broken workflows simply accelerates failure.

Before deploying agents at scale, organisations should pause and apply a simple checklist:

• Start with outcomes, not agents - Be explicit about the business result you want to change and how success will be measured.

• Fix the process first - If a workflow is inconsistent, manual, or poorly governed, agents will amplify its flaws.

• Define human–agent boundaries - Decide where agents act independently, where they assist, and where humans retain final authority.

• Design for trust and adoption - Transparency, auditability, and explainability matter as much as speed and autonomy.

• Build governance in from day one - Compliance, security, and data sovereignty cannot be retrofitted later.

• Plan for scale, not pilots - If an agent cannot be industrialised, supported, and evolved, it will remain a demo.

Agentic AI is not another tool rollout. It is a structural shift in how work gets done. The organisations that succeed will be those that treat agents as part of a new operating model, not just a faster way to automate yesterday’s processes.

FEBRUARY 02, 2026

WHAT MICROSOFT'S "FRONTIER FIRM" GETS RIGHT AND WHAT IT LEAVES OUT

The Frontier Firm is Microsoft’s way of describing a next-generation operating model for organisations adopting AI at scale. In Microsoft’s framing, Frontier Firms are built around on-demand intelligence, where humans and AI agents work together to deliver outcomes faster, with greater agility and at lower marginal cost.

As a concept, it’s helpful. It gives leaders a way to visualise what “AI transformation” might actually look like beyond pilots and isolated use cases.

According to Microsoft, Frontier Firms evolve across four dimensions: Enriching Employee Experience, Reinventing Customer Engagement, Reshaping Business Processes, and Accelerating Innovation. In practice, this means AI agents handling routine execution, while people focus on judgement, direction, escalation, and creativity.

We can already see early frontier behaviours across sectors. Financial services firms are using agents to handle internal policy and compliance queries. Retailers are deploying AI to personalise post-purchase engagement at scale. Professional services organisations are automating time capture, document synthesis, and knowledge retrieval. Manufacturing and engineering firms are using agents to prioritise leads and support complex sales cycles.

Underpinning all of this is a strong platform story. Enterprise data, surfaced through tools like Microsoft Graph and Copilot, lowers the barrier to insight. Low-code and pro-code platforms democratise who can build solutions. In theory, intelligence becomes accessible to everyone, not just specialists.

But this is where the Frontier Firm narrative risks oversimplification.

Technology alone does not create a new operating model. Agents don’t magically align themselves to business priorities. Data doesn’t organise itself. Governance doesn’t emerge by accident. The organisations that struggle are rarely blocked by models or tools. They’re blocked by programme discipline.

Becoming a Frontier Firm requires sustained effort: clear ownership of use cases, strong data foundations, security and compliance embedded from day one, and cross-functional teams that include business leaders, IT, data, legal, and vendors working together. It also requires sequencing. Most organisations will operate across multiple maturity levels at once, not follow a neat, linear journey.

In that sense, the Frontier Firm is best understood as a North Star, not a destination you buy your way into. It helps leaders imagine a future state. Getting there still demands hard, unglamorous work.

Key takeaways

• The Frontier Firm is a vision for an AI-enabled operating model, not a product or programme.

• Data and platforms democratise intelligence, but people and governance determine impact.

• AI agents amplify execution; humans remain accountable for outcomes.

• Strong programme management and cross-functional collaboration are the real differentiators.

• The vision is useful, but the journey is long, uneven, and very real.

The Frontier Firm helps organisations see what’s possible. Becoming one is still a choice, and a commitment.

JANUARY 29, 2026

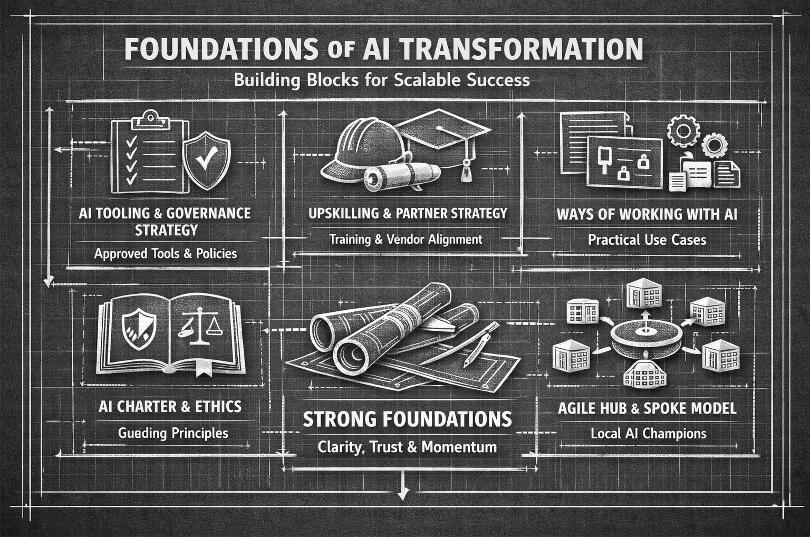

AN ACTUAL BLUEPRINT FOR AI TRANSFORMATION

Most AI transformations fail early, not because the technology underperforms, but because organisations skip the foundation stage. Tools are introduced before direction is clear, pilots multiply without coherence, and confidence erodes before value is realised.

Across industries, effective AI programmes tend to establish a small number of foundational artefacts. These act as milestones, creating clarity, alignment, and the conditions for responsible, scalable adoption.

1. An AI Tooling and Governance Strategy

This artefact defines which AI tools are approved, how they align with security, data protection, and regulatory requirements, and where experimentation is encouraged or constrained. Its purpose is to remove ambiguity and allow teams to focus on applying AI to real work rather than navigating risk alone. The detail will vary by sector, but the outcome should always be confidence and consistency.

2. A Firm-Wide Upskilling and Partner Strategy

Strong foundations depend on people capability. A coherent approach to upskilling and vendor partnerships typically includes role-based learning pathways, applied training tied to real workflows, and clear relationships with learning and technology partners. The aim is practical fluency at scale, not deep technical expertise for everyone.

3. Defined Ways of Working with AI in Real Scenarios

AI becomes real when it is embedded into everyday activities. Documented ways of working for tasks such as content creation, analysis, documentation, and proposal development provide this bridge. These artefacts clarify where AI adds value, where human judgement applies, and how outputs are reviewed. Early examples do not need to be perfect; they exist to prove value and inform future design.

4. An AI Charter as the Backbone

An AI Charter underpins the foundation stage by setting clear principles for responsible use. It should protect employees, enable innovation, and earn trust with clients and partners by defining expectations around transparency, accountability, and human oversight. This turns responsible AI into a practical operating norm rather than an abstract aspiration.

5. An Agile Hub-and-Spoke Delivery Model

Finally, many successful programmes establish an agile hub-and-spoke model. A central team provides direction, governance, and shared assets, while local representatives or champions act as points of contact within business units or geographies. This requires sustained coordination and should not be underestimated. Its value lies in creating two-way flow: ideas, feedback, and innovation move upwards, not just instructions moving downwards.

Key takeaway

The foundation stage is not about scale or sophistication. It is about establishing the right artefacts, structures, and relationships to create clarity, trust, and momentum.

If you are shaping or reassessing the foundation stage of an AI transformation and want to explore how these artefacts could be tailored to your organisation and industry, I welcome the conversation.

JANUARY 25, 2026

KNIME: THE QUIET SWISS ARMY KNIFE OF DATA AND AI

I first started working with KNIME back in 2015. Since then, I’ve used it across multiple client engagements, particularly in UK central government, and have completed several KNIME certifications along the way. Over that time, one thing has stayed consistent: KNIME continues to quietly deliver value while often being overlooked in favour of louder, more expensive platforms.

What KNIME gets right is the idea that innovation does not have to be costly to be effective. Its open-source foundation has democratised access to advanced analytics, data engineering, and now AI. Teams do not need large budgets or complex infrastructure just to get started. That accessibility matters, especially in environments where funding is constrained, governance is critical, and delivery timelines are unforgiving.

From a delivery perspective, time to value is one of KNIME’s biggest strengths. I’ve seen meaningful prototypes and production-ready workflows delivered in weeks rather than months. The visual, workflow-based approach makes logic transparent, auditable, and easier to transfer between teams, which is often the difference between a solution that sticks and one that quietly fades away.

More recently, KNIME has evolved into much more than a data preparation or analytics tool. With support for machine learning, automation, and agent-based workflows, it has effectively become a Swiss Army knife for data and AI. You can move from ingestion to insight to automated decision-making in a single environment, selecting only the capabilities you need without adding unnecessary complexity.

At a time when innovation strategies often default to the newest or most expensive tooling, KNIME is a reminder that practical, proven platforms still matter. It may not shout the loudest, but it consistently delivers outcomes that organisations actually care about.

Key takeaways

• Innovation does not need to be expensive to be effective

• Open-source platforms can deliver enterprise-grade outcomes

• KNIME offers rapid time to value with strong governance and transparency

• It is a versatile data and AI platform that should not be discounted in any tooling or innovation strategy

Here is a link to KNIME - https://www.knime.com. Reach out if you have any questions.

JANUARY 23, 2026

A PRACTICAL VIEW OF GEN AI FOR BUSINESS LEADERS

Generative AI is often framed as complex or experimental. In reality, its value in business is increasingly clear and practical. Data goes in, patterns are analysed, and the system produces outputs that support decisions, accelerate work, or remove friction from everyday processes.

At its core, generative AI addresses a problem most organisations already recognise: too much time is spent searching for information, drafting material, and coordinating knowledge across teams. These bottlenecks slow decision-making and dilute expertise. Generative AI reduces that drag.

Across sectors, the impact follows a consistent pattern.

In professional services, generative AI is already accelerating proposal development, summarising client material, and helping bid teams reuse prior knowledge more effectively. This shortens sales cycles and improves quality without increasing cost.

In finance, it reduces manual effort in reporting, forecasting, and risk analysis by synthesising large volumes of information and highlighting key insights. Leaders spend less time preparing information and more time acting on it.

In customer operations, it improves service by drafting responses, resolving common queries, and guiding agents in real time. This leads to faster resolution, improved consistency, and lower operational overhead.

In operations and manufacturing, generative AI supports planning and decision-making by analysing historical data, identifying patterns, and recommending actions, such as optimising supply chains or anticipating demand shifts.

What differentiates generative AI from traditional automation is flexibility. Instead of being hard-coded for a single task, it works through natural language and can be applied across multiple processes. In practice, most value is realised not through complex models, but by removing everyday bottlenecks in how people access information and make decisions.

JANUARY 21, 2026

WHEN GOOD WORK DOESN'T LAND, AVOIDING THE EXECUTIVE COMMUNICATION TRAP

Many experienced professionals fall into the same trap when working at senior or executive level: assuming that the quality of the work will speak for itself. It rarely does.

Executives are not short of intelligence. They are short of time. What they value most is clarity, decisiveness, and outcomes they can confidently repeat to others.

One common pitfall is over-indexing on process. Detailed thinking, rigorous analysis, and careful design are essential to delivering real change, but they are not what senior leaders want to consume. They want to know three things: what exists now, why it matters, and what decision is required. Anything beyond that is background noise.

Another trap is prioritisation drift. When given autonomy, it’s tempting to pursue initiatives that are interesting, innovative, or intellectually satisfying. While these may create value, they can distract from foundational asks if they are not clearly framed as “additional” or “optional.” Senior stakeholders expect visible progress on their top priorities first, even if that progress is imperfect.

Communication format matters as much as content. Long documents, dense slides, and narrative-heavy updates dilute impact. Executive communication should be outcome-led, concise, and easy to relay upwards. If a leader cannot summarise your work in a single sentence, it is unlikely to influence decisions.

Finally, don’t confuse explanation with reassurance. Explaining complexity often feels helpful, but at senior levels it can read as uncertainty. Confidence comes from stating outcomes plainly, supported by a small number of facts or metrics.

The lesson is simple but uncomfortable: doing the work is not enough. To create impact, professionals must learn to translate effort into crisp outcomes, close topics decisively, and focus relentlessly on what leaders need to say next.

Clarity, not completeness, is what moves organisations forward.

JANUARY 15, 2026

FROM INTENT TO IMPACT: SETTING AI OBJECTIVES THAT ACTUALLY WORK

AI programmes fail in remarkably predictable ways. Lots of enthusiasm, lots of slides, very little change in how work actually gets done. The difference between motion and progress usually comes down to one thing: objectives. Not lofty aspirations, but the kind that quietly reshape behaviour over time.

The most effective objectives balance three reinforcing elements.

First, Learning. A shared baseline of AI understanding removes uncertainty and levels the playing field. People know what AI is, where it helps, and what “good” looks like. From there, capability deepens by role, allowing expertise to grow without overwhelming everyone on day one.

Second, Usage. Learning alone is inert. Objectives must encourage practical application in real work, embedding AI into everyday activities to improve efficiency, clarity, and outcomes. This shifts AI from something people talk about to something they rely on.

Third, Quality & Responsibility. Every use of AI is anchored in a clear AI Charter, setting expectations for ethical, secure, and responsible behaviour. This provides guardrails without slowing momentum, ensuring outputs are trustworthy and aligned with organisational values.

Together, these objectives create a simple but powerful loop: learn, apply, improve. They give individuals clarity on where to focus their effort, and leaders confidence that progress is measurable, responsible, and real. Set well, objectives stop being an administrative exercise and start becoming the engine that turns AI intent into lasting impact.

JANUARY 11, 2026

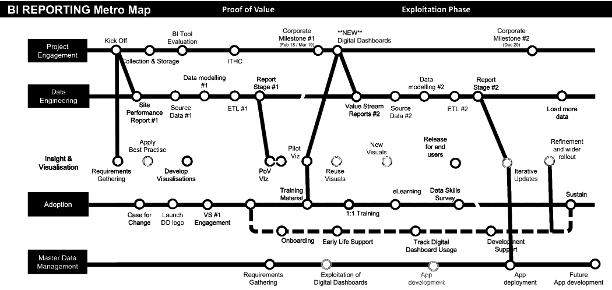

WHY YOUR DATA PROGRAMME NEEDS A METRO MAP

Most data and analytics programmes fail for a very boring reason. Not technology. Not talent. Misalignment. Different stakeholders are mentally riding different trains, heading to different destinations, all convinced they are on the right route.

That is where the metro map comes in.

A metro map is a deceptively simple way to explain a data and analytics initiative. Instead of long decks or abstract operating models, you show the journey. Lines represent workstreams. Stations represent outcomes. Interchanges show dependencies. Suddenly, everyone can see how the whole system fits together.

What surprised me most was the reaction from senior leaders. Several said they had never seen data programmes explained this way before. And more importantly, they finally understood why certain roles, sequencing, and funding decisions were non-negotiable. When the map shows that you cannot reach “Advanced Analytics” without first stopping at “Data Foundations,” the conversation changes fast.

The real value is not the diagram itself. It is what it unlocks.

Executives can see why investment is staged rather than front-loaded. Delivery teams can explain why specialist skills are needed at specific points, not everywhere at once. Business leaders can spot where they need to engage, rather than being “consulted” too late. Everyone understands that skipping stations creates derailments later.

The metro map also flattens hierarchy. A graduate analyst and a board sponsor can look at the same visual and have a meaningful conversation. That shared language is rare, and powerful.

Data and analytics is a team sport played over time. The metro map makes the effort visible, the trade-offs explicit, and the destination believable. Once people can see the journey, they are far more willing to get on the train, and crucially, stay on it.
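For teams that want to keep the map honest as the programme evolves, the sequencing logic behind it can be held as data rather than only as a drawing. The sketch below is one possible way to do that, using Python's standard-library `graphlib` module (Python 3.9 or later). The station "Governed Reporting", the dependency choices, and the helper names `journey` and `skipped_stations` are all hypothetical, added purely for illustration; only "Data Foundations" and "Advanced Analytics" come from the example above.

```python
from graphlib import TopologicalSorter

# Each station (an outcome) maps to the stations that must be reached first.
# "Governed Reporting" is a hypothetical station added for illustration.
prerequisites = {
    "Data Foundations": set(),
    "Governed Reporting": {"Data Foundations"},
    "Advanced Analytics": {"Data Foundations", "Governed Reporting"},
}


def journey(prerequisites):
    """Return the stations in an order that never skips a prerequisite."""
    return list(TopologicalSorter(prerequisites).static_order())


def skipped_stations(target, reached, prerequisites):
    """List the direct prerequisites of `target` that have not been reached yet."""
    return sorted(prerequisites[target] - set(reached))


print(journey(prerequisites))
print(skipped_stations("Advanced Analytics", ["Data Foundations"], prerequisites))
```

The second call shows the kind of question the map answers in a steering meeting: what still has to be in place before a given outcome is reachable. `TopologicalSorter` also raises `graphlib.CycleError` if two stations end up depending on each other, which is a useful early warning that the plan itself is circular.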

JANUARY 7, 2026

DEFINE “GOOD” OR DELIVER THE WRONG THING FASTER

In internal AI transformation, the technology is rarely the hardest part. The hardest part is operating in ambiguity while still delivering outcomes people can trust.

In my experience, “progress” only counts when it’s visible. We quietly create a repository of tangible assets (a SharePoint hub, demo videos, bite-sized knowledge content) even before any formal launch. It’s not theatre. It’s usually risk management. When stakeholders are away, priorities shift, or scrutiny appears, evidence beats intent every time.

The bigger takeaway when I reflect on the engagements I have been involved with is language. Senior leaders often ask for things like an “AI factory” or “knowledge management at scale”. Those phrases sound decisive, but they can mean ten different things to ten different people. If you don’t align on what “good” looks like, you can work incredibly hard and still deliver the wrong outcome.

My rule is simple: start every conversation with three questions:

- What decision do you need? That is, what are you going to sign off, say yes to, or be accountable for?

- What outcome are we measuring?

- When will we agree it’s successful?

Once those answers are clear, delivery becomes focused and proportionate. You stop over-engineering. You stop guessing. You stop optimising for reassurance instead of impact. Most importantly, you create a shared contract that protects both the work and the people doing it. Without that contract, even strong delivery can be reframed as misalignment after the fact.

The practical takeaway: reduce the noise, define success early, and document your wins. AI programmes move fast. Memory and accountability do not.

If you’re navigating digital, data, or AI transformation and want a sounding board grounded in real delivery experience and senior stakeholder engagement, feel free to reach out.